About

Thanks for visiting my page!

I build and maintain infrastructure for financial market interaction, with a focus on prediction markets. I will be available for full-time roles in 2026. My ideal position is an engineering role focused on data systems.

Professional experience:

Built data pipelines, automated risk processes, and developed trader dashboards.

Designed ML models to generate and analyze volatility surfaces.

Engineered SQL workflows, automated ETL, and implemented data quality controls.

Designed pricing models, integrated dashboards with APIs, and managed database infrastructure.

Educational background:

Master’s in Financial Mathematics.

Bachelor’s in Management Information Systems and Finance.

Overview

This project is a full-stack trading platform that integrates real-time data ingestion, processing, and machine learning to make automated trading decisions.

The system is built around four core modules:

- Market Data Ingestion: Ingests real-time, tick-level data from a crypto exchange.

- Market Data Storage: Continuously writes incoming data to a local and cloud-backed database.

- Machine Learning Service: Retrains an array of machine learning models regularly to adapt to current market conditions.

- Execution Engine: Executes trades based on ML-generated signals, with integrated risk and portfolio management.

Here are some of the key results:

- High-Throughput Streaming: Processes 100k+ ticks per minute across five cryptocurrencies with sub-second latency.

- Efficient Storage: Maintains a rolling 30-day window of high-frequency data (over 500 million rows) while archiving older data to the cloud.

- Adaptive Performance: ML models quickly identify new patterns, adjust their parameters, and optimize trading strategies.

Additionally, I took away several lessons from building this:

- Designing for constant uptime: Long-running use surfaced unexpected failures, underscoring the need for proactive resource monitoring, alerting, and graceful restarts.

- Scaling from prototype to production: Early ad hoc decisions led to painful rework (such as migrating to Docker and consolidating services), so I now design with production in mind from the outset.

- Building for modular growth: A monolithic first pass was quick to ship but became a bottleneck as the system grew, reinforcing the value of clear module boundaries and interfaces early on.

Architecture

DigitalOcean hosts the server the project runs on. It is currently supported by a $48/month droplet with 8GB RAM, 4 vCPUs, and 160GB SSD storage.

Docker serves as the containerization platform. Besides the module containers, there are containers for Kafka, ClickHouse, Grafana, Prometheus, and other miscellaneous services.

Grafana powers all dashboards for real-time monitoring, visualization, and alerting.

Binance serves as the source for cryptocurrency tick-level data, which is ingested from the exchange in real time via a WebSocket provided through Finnhub.

Kafka captures all data from the WebSocket: a producer publishes incoming messages to a topic managed by a single broker, and various downstream consumers read from that topic.
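As a rough illustration of the producer step, the sketch below turns one WebSocket message into key/value records and hands them to a send function. The message shape (`type`, `data`, and the `s`/`p`/`v`/`t` fields), the topic name `ticks`, and the `send` callable are assumptions made for the example, not the project's actual code; in practice `send` would wrap a Kafka client's producer call.

```python
import json
from typing import Callable, Iterator

TOPIC = "ticks"  # hypothetical topic name


def to_records(raw: str) -> Iterator[tuple[bytes, bytes]]:
    """Turn one WebSocket message into (key, value) pairs for publishing."""
    msg = json.loads(raw)
    if msg.get("type") != "trade":  # skip pings and other control messages
        return
    for trade in msg.get("data", []):
        # Key by symbol so ticks for one symbol hash to the same partition.
        key = trade["s"].encode()
        value = json.dumps(
            {
                "symbol": trade["s"],
                "price": trade["p"],
                "volume": trade["v"],
                "ts_ms": trade["t"],
            }
        ).encode()
        yield key, value


def publish(raw: str, send: Callable[[str, bytes, bytes], None]) -> int:
    """Publish every trade in a message through the injected send function."""
    count = 0
    for key, value in to_records(raw):
        send(TOPIC, key, value)
        count += 1
    return count
```

Injecting `send` keeps the parsing logic testable without a running broker.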

ClickHouse is connected to the data stream via Kafka. New data is appended to the database via batch processing, and monitoring/diagnostics measures are periodically recorded.
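Batch appends of this kind are often built around a small buffer that flushes by size or age. The sketch below is one way to do that, assuming a hypothetical `flush` callable that performs the actual database insert; the class name and default thresholds are illustrative only.

```python
import time
from typing import Callable, Optional


class BatchBuffer:
    """Collect rows and flush them in batches, by row count or by age."""

    def __init__(
        self,
        flush: Callable[[list], None],  # hypothetical sink, e.g. a bulk insert
        max_rows: int = 10_000,
        max_age_s: float = 1.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._flush = flush
        self._max_rows = max_rows
        self._max_age_s = max_age_s
        self._clock = clock
        self._rows: list = []
        self._first_at: Optional[float] = None

    def add(self, row) -> None:
        if not self._rows:
            self._first_at = self._clock()  # age is measured from the first row
        self._rows.append(row)
        if self._due():
            self.flush()

    def _due(self) -> bool:
        if len(self._rows) >= self._max_rows:
            return True
        return self._clock() - self._first_at >= self._max_age_s

    def flush(self) -> None:
        if self._rows:
            batch, self._rows = self._rows, []
            self._first_at = None
            self._flush(batch)
```

Note that the age check only runs when a row arrives, so a quiet stream would also need a periodic call to `flush()`.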

AWS serves as cold storage for old data. Data older than 7 days is converted to Parquet files, deleted from ClickHouse, and uploaded to an S3 bucket.
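The selection step of such an archive job can be sketched as follows, assuming the data is organized into daily partitions. The function names and the object key layout are hypothetical; only the 7-day threshold comes from the description above.

```python
from datetime import date, timedelta

RETENTION_DAYS = 7  # threshold from the description above


def partitions_to_archive(
    partitions: list[date], today: date, retention_days: int = RETENTION_DAYS
) -> list[date]:
    """Return daily partitions that are older than the retention window."""
    cutoff = today - timedelta(days=retention_days)
    return sorted(d for d in partitions if d < cutoff)


def s3_key(symbol: str, day: date) -> str:
    """Build a hypothetical object key, partitioned by symbol and day."""
    return f"ticks/symbol={symbol}/date={day.isoformat()}/part-0.parquet"
```

A cautious job would confirm that each upload succeeded before dropping the matching partition from the database.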

- The machine learning module retrains five models at regular intervals with the most recent set of data, using Python libraries such as scikit-learn and TensorFlow.

- The execution module loads these ML models to generate real-time signals and simulate trades. This process is supported by risk and portfolio monitoring engines.
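To make the role of a risk engine concrete, here is a minimal sketch of one common check: clipping a signal-driven order so that per-order and per-symbol exposure caps both hold. The limit names and the clipping rule are assumptions for illustration and may differ from the project's actual risk logic.

```python
from dataclasses import dataclass


@dataclass
class RiskLimits:
    max_position_usd: float  # hypothetical per-symbol exposure cap
    max_order_usd: float     # hypothetical per-order cap


def allowed_order_usd(
    signal_usd: float, position_usd: float, limits: RiskLimits
) -> float:
    """Clip a signed order (positive = buy) so both limits hold."""
    # Cap the order size first.
    order = max(-limits.max_order_usd, min(limits.max_order_usd, signal_usd))
    # Then clip so the resulting position stays inside [-cap, +cap].
    target = max(
        -limits.max_position_usd,
        min(limits.max_position_usd, position_usd + order),
    )
    clipped = target - position_usd
    # Never flip the direction of the order; reject it instead.
    if clipped * order < 0:
        return 0.0
    return clipped
```

For example, with a 1,000 USD position cap and a 300 USD order cap, a 300 USD buy signal on a 900 USD position is reduced to 100 USD.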

Dashboards

Portfolio performance after this project ran for around 30 days.

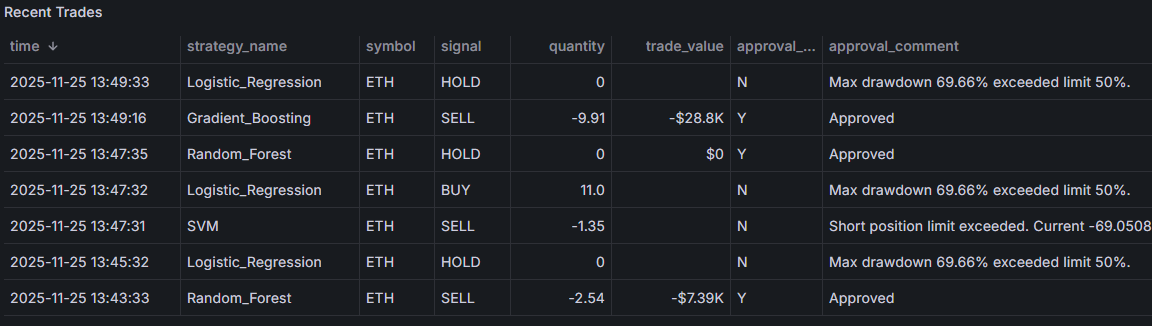

Snapshot of trade executions, along with a demonstration of the risk engine setting trade limits.

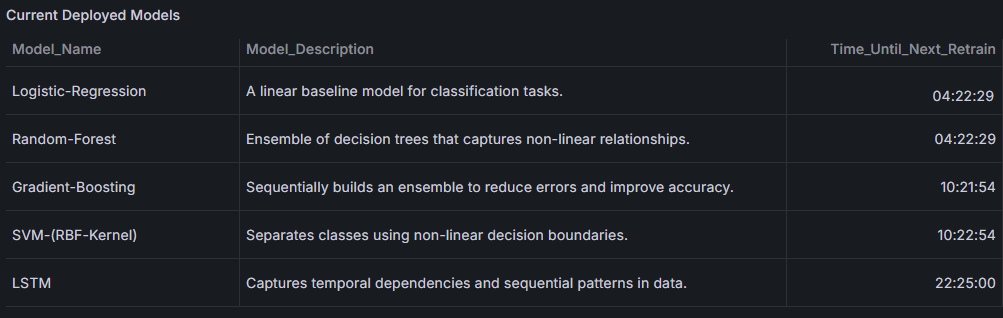

List of the different ML models that are implemented in the project.

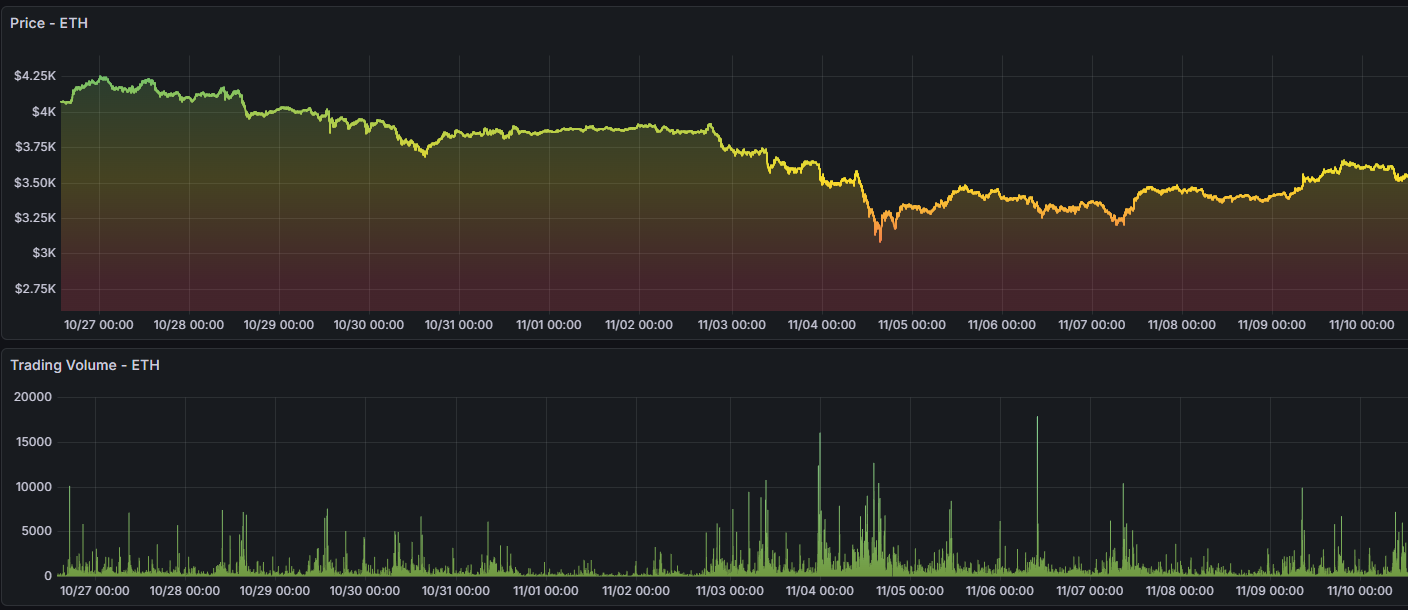

Time series of granular price ticks for ETH, one of the tickers (alongside BTC, SOL, ADA, and XRP) processed via Kafka and stored in ClickHouse.

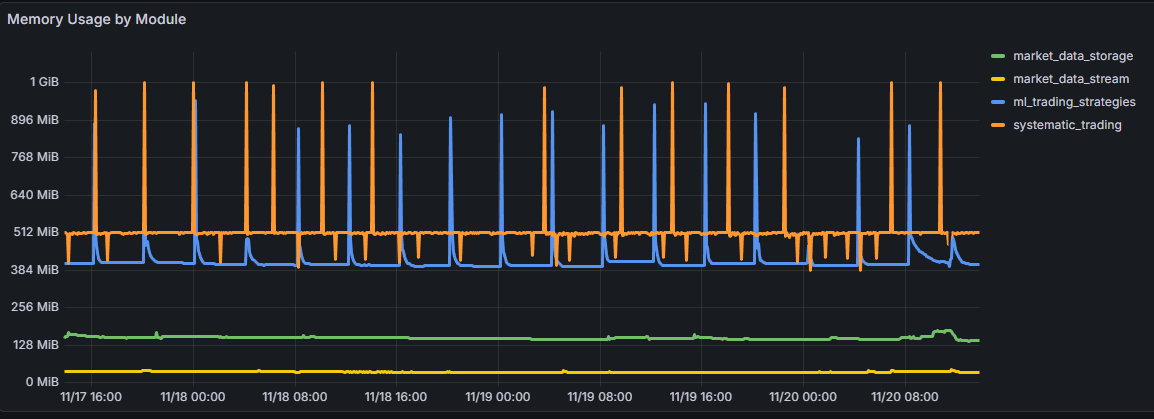

Snapshot of running memory usage by Docker container, showing that different services have varying resource demands.

Prediction Market Aggregator

Overview

Predidesk (predidesk.com) is a live prediction market aggregator that consolidates data from multiple platforms into a single, unified interface. It solves the fragmentation problem in prediction markets by providing a centralized location for discovery and analysis.

The platform is built on modern data engineering principles, focusing on reliability, scalability, and automated intelligence.

Key technical highlights include:

- Multi-Source API Ingestion: Concurrently ingests real-time data from Kalshi and Polymarket, normalizing inconsistent schemas into a unified format.

- AI-Driven Data Processing: Leverages OpenAI GPT-5 calls combined with embeddings and regex to parse unstructured market questions and metadata. Extensive prompt engineering ensures high-accuracy classification and data extraction.

- Robust Data Pipeline: Processes raw JSON payloads, cleanses the data, and stores structured records in a PostgreSQL database for efficient querying.

- Full-Stack Implementation: Features a high-performance backend serving data via FastAPI and a responsive frontend designed for seamless experiences on both mobile and desktop.
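Schema normalization across sources can be sketched with one small adapter per source that maps into a shared record type. Everything below is illustrative: the source names, the payload field names (`ticker`, `yes_price_cents`, `yes_price`, and so on), and the `Market` type are hypothetical and do not reflect the actual Kalshi or Polymarket payloads.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Market:
    source: str
    market_id: str
    question: str
    yes_price: float  # implied probability in [0, 1]


def from_source_a(raw: dict) -> Market:
    # Hypothetical payload: prices quoted in cents (0-100).
    return Market(
        "source_a",
        raw["ticker"],
        raw["title"].strip(),
        raw["yes_price_cents"] / 100,
    )


def from_source_b(raw: dict) -> Market:
    # Hypothetical payload: numeric id, prices quoted as decimal strings.
    return Market(
        "source_b",
        str(raw["id"]),
        raw["question"].strip(),
        float(raw["yes_price"]),
    )


NORMALIZERS = {"source_a": from_source_a, "source_b": from_source_b}


def normalize(source: str, raw: dict) -> Market:
    """Dispatch a raw payload to the adapter for its source."""
    return NORMALIZERS[source](raw)
```

Keeping each adapter separate means a schema change on one platform touches only one function.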

Platform Preview

The Predidesk landing page, showcasing different features of the site.

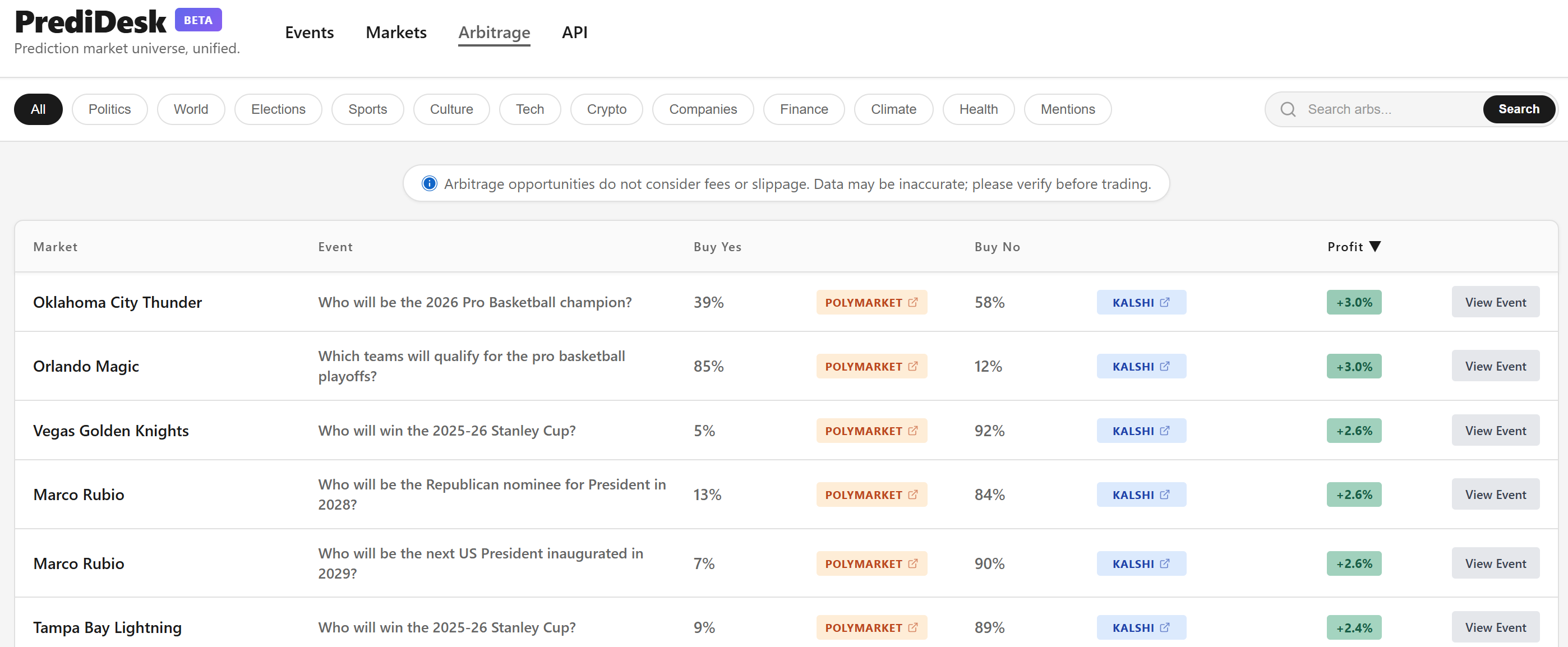

Arbitrage & Opportunities

Predidesk includes a dedicated Arbitrage Dashboard that scans for price discrepancies across platforms. By pairing complementary positions on the same event (e.g., "Buy Yes" on Polymarket and "Buy No" on Kalshi), the system highlights opportunities where the payout exceeds the combined cost of both positions regardless of the outcome.
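The underlying arithmetic is simple: if a Yes contract on one platform and a No contract on the other each pay 1 on their outcome, exactly one of them pays out, so buying both is profitable whenever their combined price is below 1. A minimal sketch follows, with a hypothetical function name and a flat `fees` parameter standing in for real trading costs.

```python
def arbitrage_edge(yes_price_a: float, no_price_b: float, fees: float = 0.0) -> float:
    """Profit per 1 unit of payout from buying Yes on A and No on B.

    Prices are expressed as fractions of the payout (0 to 1).
    A positive result marks a candidate opportunity.
    """
    return 1.0 - (yes_price_a + no_price_b) - fees
```

For instance, Yes at 0.40 and No at 0.55 cost 0.95 together, leaving an edge of about 0.05 per unit of payout before fees. In practice the two contracts must also resolve on identical terms for the pairing to hold.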

The Arbitrage view, identifying live spreads between Polymarket and Kalshi contracts.

Writing

Check out my Substack!